Deep Dive into Understanding Large Language Models: A Vital Guide

Unlock the future of business with LLMs, explained simply! Dive into the article to discover the revolution.

Hello! Kevin Wang here. By day, I'm a devoted product manager, but come evening, I'm the force behind a Gen-AI Tool - tulsk.io. My mission? Guide you through the startup world, spotlighting user experience, product management, and growth strategies to fuel your venture. Keen to dive deeper? Join the tulsk.io community. Your support is invaluable and ensures I can keep sharing insights for budding entrepreneurs like you. Let's journey together!

Today’s Insights:

Transformational Impact:

LLMs, like ChatGPT and Google's Bard, are revolutionizing businesses with enhanced accuracy, efficiency, and personalization.

Their true potential is unlocked through fine-tuning, allowing for tailored solutions that meet specific business needs.

This positions companies at the forefront of AI innovation, ensuring longevity in a dynamic market.

Efficiency and Resource Conservation:

Fine-tuning enhances the base capabilities of LLMs, making them more resource-efficient than building models from the ground up.

Harnesses the extensive knowledge within LLMs, saving time, and computational effort, and reducing the need for extensive training data.

Competitive Advantage:

Fine-tuned LLMs provide businesses with actionable insights, crucial for decision-making.

In a data-driven business world, these precise insights offer a significant competitive edge.

Companies leveraging fine-tuned LLMs streamline operations and solidify their position in the AI-driven landscape.

Large Language Models are becoming a hot topic (check out Figure 1 for proof). Thanks to this, we're seeing lots of new websites and tools. ChatGPT got a bunch of new users in January 2023, showing people are really into this. And not to be left out, Google launched something similar called Bard in February 2023.

For businesses, these models can:

Simplify tasks

Be cost-effective

Personalize things for users

Improve accuracy.

What is LLM?

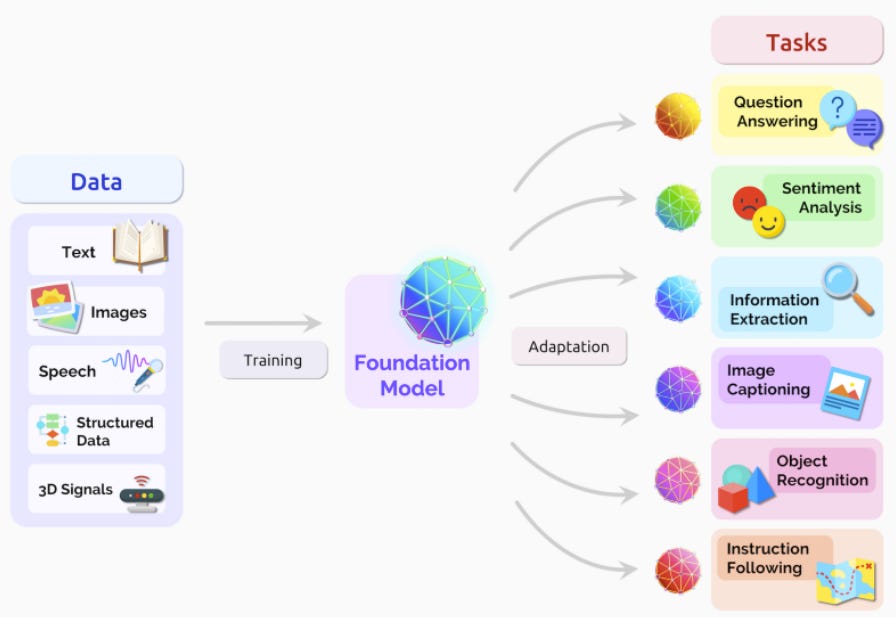

A large language model (LLM) is a type of language model notable for its ability to achieve general-purpose language understanding and generation. LLMs are deep learning algorithms that can recognize, summarize, translate, predict, and generate content using very large datasets

What are Zero-Shot, Few-Shot, and One-Shot Learning?

Zero-shot, one-shot, and few-shot learning are methods for machine learning predictions with limited labeled data.

One-shot: Uses one example for each new class. The goal is to understand and predict similar items based on that single sample.

Few-shot: Works with a few examples for a new class. It tries to recognize and predict items using these limited examples.

Zero-shot: Predicts for new classes without any direct examples. Instead, it taps into existing knowledge about related classes to understand and make predictions. This shows how LLMs can grasp and infer information even when direct data is missing.

Limitations of generic LLM

Limited domain understanding: LLMs trained on generic text may lack the specialized domain knowledge required for accurate sentiment analysis in specific industries or domains

Potential to generate nonsensical or harmful content: LLMs are general-purpose models, and while they can be adapted to new tasks, the process can be complex, and the results may not always be optimal

Bias: LLMs inherit the biases present in their training data, which can lead to the generation of biased or discriminatory answers

Limited knowledge: LLMs' knowledge is limited to concepts and facts that they explicitly encountered in the training data, and even with huge training data, this knowledge cannot be complete

Prompt Engineering

Prompt engineering involves refining text prompts to guide AI responses. It's a technique to improve the performance of large language models (LLMs) and the process of enhancing AI model inputs.

Key Aspects of Prompt Engineering:

Performance & Enhancement:

Improves the performance of LLMs.

Enhances AI model inputs.

Capabilities & Features:

Enabled by in-context learning, an emergent ability of LLMs.

Enhances AI services and results.

Boosts AI system usability and interpretability.

Security & Alignment:

Helps counter prompt injection attacks.

Aligns AI behavior with human intent.

Role of Professionals:

Use specific vocabulary to optimize AI outputs.

Ensure outputs are aligned with user goals.

Fine-Tuning Large Language Model

Fine-tuning LLMs can be highly beneficial for businesses looking to harness the power of AI. The definition is provided below.

Fine-tuning is taking a pre-trained model and training at least one internal model parameter (i.e. weights). - Shawhin Talebi

Fine-tuning LLMs can be more resource-efficient compared to training a language model from scratch, as it leverages the knowledge already captured by the model, saving time, computational power, and training data requirements.

For instance, comparing the outputs of davinci (base GPT-3 model) and text-davinci-003 (a fine-tuned model) reveals differences. The base model lists questions like a search query, whereas the fine-tuned model provides a more beneficial answer. The method used for text-davinci-003 is alignment tuning, designed to enhance the LLM's response quality. More details on that will follow.

Conclusion

Large Language Models (LLMs) have rapidly gained traction, as evidenced by their increasing popularity and adoption in tools like ChatGPT and Google's Bard. These models hold transformative potential for businesses, offering simplified tasks, cost-effectiveness, personalization, and heightened accuracy.

Fine-tuning, like prompt engineering, enhances LLMs, making them more resource-efficient and tailored to specific tasks compared to building models from scratch. This process harnesses the vast knowledge already embedded in the LLM, conserving time, computational resources, and training data needs.

In the competitive business landscape, the ability to derive precise, meaningful, and actionable insights from data is invaluable. LLMs, especially when fine-tuned, offer this edge. Companies that leverage the power of fine-tuned LLMs are not only streamlining operations but are also positioning themselves at the forefront of AI-driven innovation, ensuring sustained success in the ever-evolving marketplace.

Reference:

🌟 Spread the Curiosity! 🌟

Enjoyed "Curiosity Ashes"? Help ignite a wave of innovation and discovery by sharing this publication with your network. Let's foster a community where knowledge is celebrated and shared. Hit the share button now!